SyMTRS: Synthetic Multi-Task Remote Sensing Dataset

arXiv 2026

¹TOELT LLC AI lab

²HSLU (Lucerne University of Applied Sciences and Arts)

³armasuisse S+T

Abstract

Monocular Depth Estimation

We provide per-pixel depth ground truth exported directly from Unreal Engine 5 (UE5). The point cloud below is reconstructed from our single-view depth maps and shows the dense architectural detail preserved in our captures.

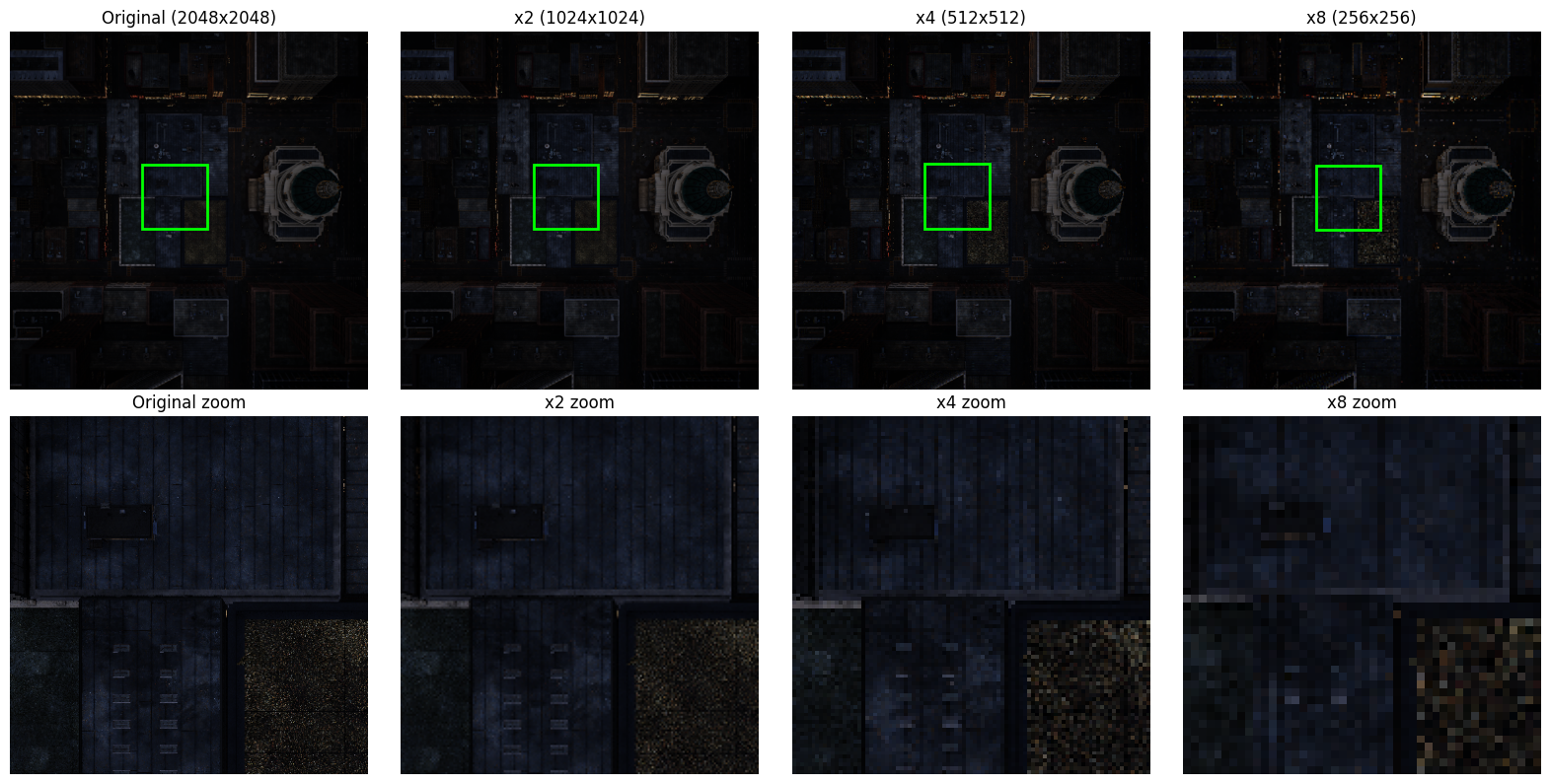
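Turning a depth map into a point cloud like the one above is a standard pinhole back-projection. The sketch below is illustrative only: it assumes each pixel stores planar Z depth, an ideal pinhole camera with a known horizontal field of view, and a centered principal point. None of these conventions, nor the function name `depth_to_point_cloud`, come from the SyMTRS release. Unreal Engine's default world unit is the centimeter, so exported depth values may also need rescaling to meters.

```python
import numpy as np


def depth_to_point_cloud(depth: np.ndarray, hfov_deg: float) -> np.ndarray:
    """Back-project an (H, W) depth map into an (N, 3) point cloud in camera coordinates.

    Assumptions (hypothetical, not taken from the SyMTRS release):
      * each pixel stores planar Z depth, not the Euclidean distance to the camera center;
      * the camera is an ideal pinhole with horizontal field of view `hfov_deg`;
      * the principal point is the image center and pixels are square.
    """
    h, w = depth.shape
    fx = (w / 2.0) / np.tan(np.deg2rad(hfov_deg) / 2.0)  # focal length in pixels
    fy = fx                                                # square pixels
    cx, cy = (w - 1) / 2.0, (h - 1) / 2.0                  # principal point at the image center
    v, u = np.indices((h, w), dtype=np.float64)            # row (v) and column (u) coordinates
    z = depth.astype(np.float64)
    x = (u - cx) * z / fx
    y = (v - cy) * z / fy
    points = np.stack([x, y, z], axis=-1).reshape(-1, 3)
    # Keep only finite points in front of the camera (drops e.g. sky or invalid pixels).
    valid = np.isfinite(points).all(axis=1) & (points[:, 2] > 0)
    return points[valid]


# Usage: a flat plane 10 units away, seen by a camera with a 90-degree horizontal FOV.
cloud = depth_to_point_cloud(np.full((4, 6), 10.0), hfov_deg=90.0)
print(cloud.shape)  # (24, 3)
```

If the exported depth turns out to be range (distance along each pixel ray) rather than planar Z, it has to be converted to Z before this back-projection is applied.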

Super-Resolution (x2, x4, x8)

SyMTRS includes multi-scale paired imagery for super-resolution (SR). The comparison below shows a full-resolution 2048x2048 ground-truth RGB image alongside its x2, x4, and x8 downsampled versions (1024x1024, 512x512, and 256x256), intended for training and evaluating SR models.

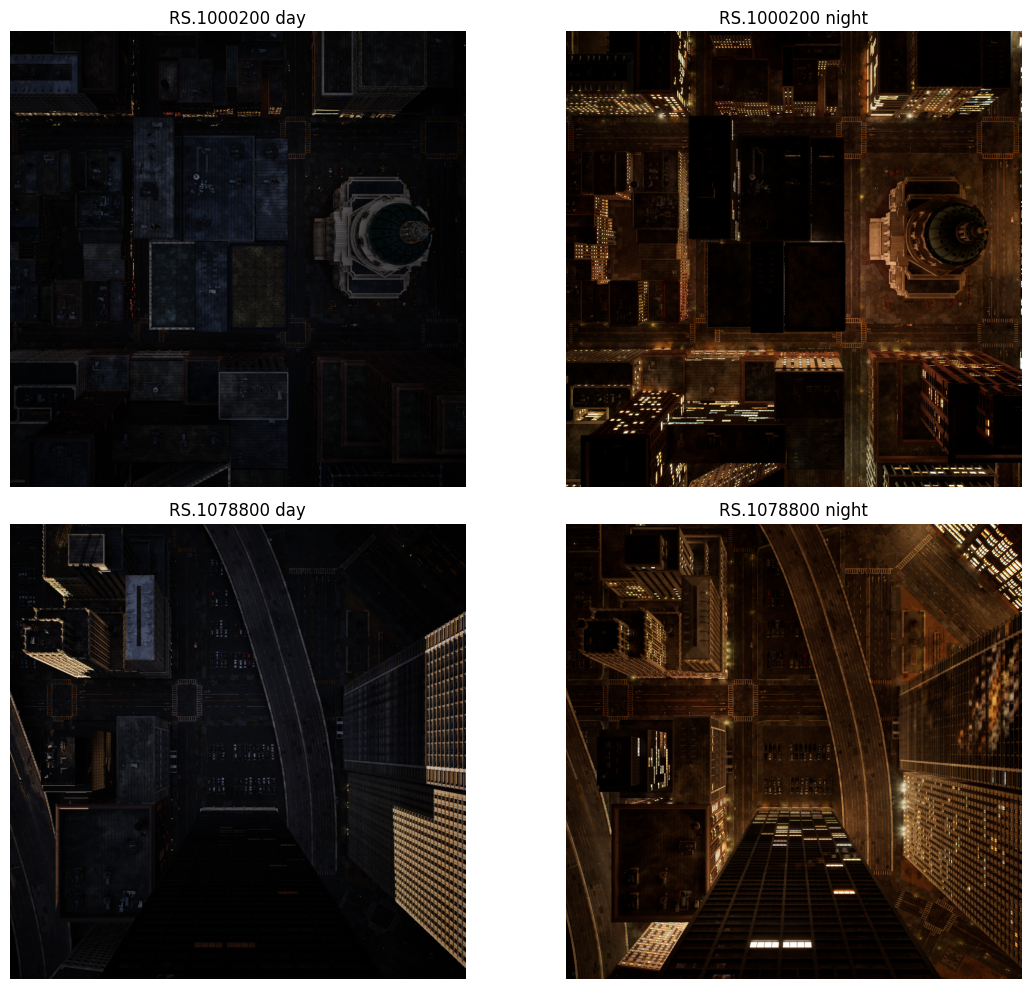
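The scale arithmetic above can be reproduced with a simple box (area-average) downsampler. This is a sketch under an explicit assumption: box averaging is a stand-in degradation chosen for illustration and is not necessarily how the SyMTRS low-resolution images were produced. The helper names `box_downsample` and `psnr` are hypothetical, and the random tile only mimics the 2048x2048 ground-truth size.

```python
import numpy as np


def box_downsample(img: np.ndarray, factor: int) -> np.ndarray:
    """Downsample an (H, W, C) image by averaging non-overlapping factor x factor blocks.

    Box (area) averaging is a stand-in degradation chosen for this sketch; it is not
    necessarily how the SyMTRS low-resolution images were produced.
    """
    h, w, c = img.shape
    if h % factor or w % factor:
        raise ValueError("image height and width must be divisible by the scale factor")
    blocks = img.reshape(h // factor, factor, w // factor, factor, c)
    return blocks.mean(axis=(1, 3))


def psnr(reference: np.ndarray, estimate: np.ndarray, max_value: float = 255.0) -> float:
    """Peak signal-to-noise ratio in dB between two images of identical shape."""
    mse = np.mean((reference.astype(np.float64) - estimate.astype(np.float64)) ** 2)
    return float("inf") if mse == 0 else float(10.0 * np.log10(max_value**2 / mse))


# Build x2 / x4 / x8 pairs from one synthetic 2048x2048 RGB "ground-truth" tile.
rng = np.random.default_rng(0)
hr = rng.integers(0, 256, size=(2048, 2048, 3), dtype=np.uint8)
pairs = {s: box_downsample(hr, s) for s in (2, 4, 8)}
for s, lr in pairs.items():
    # Nearest-neighbor upsampling back to 2048x2048 serves as a trivial SR baseline.
    up = np.repeat(np.repeat(lr, s, axis=0), s, axis=1)
    print(f"x{s}: LR {lr.shape[:2]}, baseline PSNR {psnr(hr, up):.2f} dB")
```

Any trained SR model would replace the nearest-neighbor baseline; PSNR against the 2048x2048 ground truth is one common way to compare them.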

Domain Adaptation (Day to Night)

SyMTRS supports day-to-night translation with no additional data-collection overhead. Each scene is rendered under both day and night illumination, yielding pixel-aligned image pairs, which are critical for building models for robust 24/7 autonomous monitoring.
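Pixel alignment is what makes supervised fitting and pixel-wise error metrics meaningful for day-to-night translation: pixel (i, j) in the day image shows the same surface point as pixel (i, j) in the night image. As a toy illustration, the sketch below fits a per-channel gain and offset from day to night by least squares. This global affine map is a hypothetical baseline chosen for brevity, not a SyMTRS method; real night renderings (artificial lighting, shadows) are far from affine. The synthetic arrays stand in for an aligned pair.

```python
import numpy as np


def fit_channel_affine(day: np.ndarray, night: np.ndarray) -> np.ndarray:
    """Fit night[..., c] ~= a_c * day[..., c] + b_c per channel by least squares.

    Only meaningful because the two images are pixel-aligned.
    Returns an array of shape (C, 2) holding (a_c, b_c).
    """
    params = []
    for c in range(day.shape[-1]):
        x = day[..., c].ravel().astype(np.float64)
        y = night[..., c].ravel().astype(np.float64)
        design = np.stack([x, np.ones_like(x)], axis=1)  # columns: [pixel value, bias]
        (a, b), *_ = np.linalg.lstsq(design, y, rcond=None)
        params.append((a, b))
    return np.array(params)


def apply_channel_affine(day: np.ndarray, params: np.ndarray) -> np.ndarray:
    """Apply the per-channel gain/offset to a day image (broadcasts over H and W)."""
    return day.astype(np.float64) * params[:, 0] + params[:, 1]


def mean_abs_error(pred: np.ndarray, target: np.ndarray) -> float:
    """Pixel-wise L1 error; only a fair metric when pred and target are aligned."""
    return float(np.mean(np.abs(pred.astype(np.float64) - target.astype(np.float64))))


# Toy usage with synthetic arrays standing in for an aligned day/night pair.
rng = np.random.default_rng(1)
day = rng.uniform(0, 255, size=(64, 64, 3))
night = 0.25 * day + np.array([2.0, 4.0, 12.0])  # darker, bluish "night" rendering
params = fit_channel_affine(day, night)
print(params.round(3), mean_abs_error(apply_channel_affine(day, params), night))
```

Without alignment, the same pixel-wise loss would compare unrelated surfaces, which is why unpaired translation methods are usually needed for non-aligned data.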


The MatrixCity simulation environment allows deterministic camera placement with a configurable time of day, which makes it possible to build large-scale domain adaptation benchmarks.
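Deterministic camera placement means the same viewpoints can be rendered repeatedly while only the time of day changes, so frames that share a viewpoint form aligned sets. The pure-Python sketch below plans such a benchmark: a seeded random generator fixes the poses, and each pose is crossed with several illumination settings. It does not use any MatrixCity or UE5 API; the `CameraPose` fields, pose ranges, time labels, and file naming scheme are all hypothetical.

```python
import itertools
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class CameraPose:
    """Hypothetical aerial camera pose: position in meters, heading in degrees."""
    pose_id: int
    x_m: float
    y_m: float
    altitude_m: float
    yaw_deg: float


def sample_poses(n: int, seed: int, extent_m: float = 1000.0,
                 altitude_range_m: tuple = (100.0, 300.0)) -> list:
    """Draw n camera poses; a fixed seed makes the placement deterministic across runs."""
    rng = random.Random(seed)
    return [
        CameraPose(i, rng.uniform(0.0, extent_m), rng.uniform(0.0, extent_m),
                   rng.uniform(*altitude_range_m), rng.uniform(0.0, 360.0))
        for i in range(n)
    ]


def build_manifest(poses: list, times_of_day: tuple) -> list:
    """One render job per (pose, time of day); the file naming scheme is hypothetical."""
    return [
        {"pose_id": p.pose_id, "time": t, "file": f"pose{p.pose_id:05d}_{t}.png"}
        for p, t in itertools.product(poses, times_of_day)
    ]


def aligned_pairs(manifest: list, source: str = "day", target: str = "night") -> list:
    """Pair renders that share a pose_id: same viewpoint, different illumination."""
    files = {(m["pose_id"], m["time"]): m["file"] for m in manifest}
    pose_ids = sorted({m["pose_id"] for m in manifest})
    return [(files[(i, source)], files[(i, target)])
            for i in pose_ids if (i, source) in files and (i, target) in files]


poses = sample_poses(3, seed=42)
manifest = build_manifest(poses, ("day", "dusk", "night"))
print(len(manifest))  # 9
print(aligned_pairs(manifest)[0])  # ('pose00000_day.png', 'pose00000_night.png')
```

Keeping the pose list separate from the illumination settings means new times of day can be added later without disturbing the existing viewpoints or pairings.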

Citation

If you use SyMTRS in your research, please cite the following paper:

@misc{elghazoualisymtrs,
  title={SyMTRS: Benchmark Multi-Task Synthetic Dataset for Depth, Domain Adaptation and Super-Resolution in Aerial Imagery},
  author={Safouane El Ghazouali and Nicola Venturi and Michael Rueegsegger and Umberto Michelucci},
  year={2026},
  eprint={2604.21801},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2604.21801},
}